The Fascinating History of Computers

Computers have come a long way since their inception, evolving from bulky machines with limited capabilities to sleek, powerful devices that have become an integral part of our daily lives.

The history of computers dates back to the 19th century, when Charles Babbage designed mechanical calculating machines such as the Analytical Engine, which was never completed in his lifetime. Over the years, advancements in technology led to the development of electronic computers, which revolutionized industries such as business, science, and entertainment.

One of the key milestones in computer history was ENIAC (Electronic Numerical Integrator and Computer), completed in 1945 and publicly unveiled in 1946. It is considered one of the first general-purpose electronic computers, and it marked the beginning of a new era in computing.

In the following decades, computers continued to evolve rapidly, becoming smaller, faster, and more powerful. The introduction of personal computers in the 1970s and 1980s brought computing capabilities into homes and offices around the world, democratizing access to technology.

Today, we are surrounded by a vast array of computing devices, from smartphones and tablets to supercomputers and cloud servers. The history of computers is a testament to human ingenuity and innovation, showcasing our ability to push boundaries and redefine what is possible.

As we look towards the future, it is exciting to imagine what new developments lie ahead in computer technology. One thing is certain: computers will continue to shape our world in profound ways for generations to come.

5 Fascinating Milestones in Computer History: From ENIAC to the World Wide Web

- ENIAC, one of the first general-purpose electronic computers, was completed in 1945 and weighed over 27 tons.

- The invention of the microprocessor in 1971 revolutionized the computer industry.

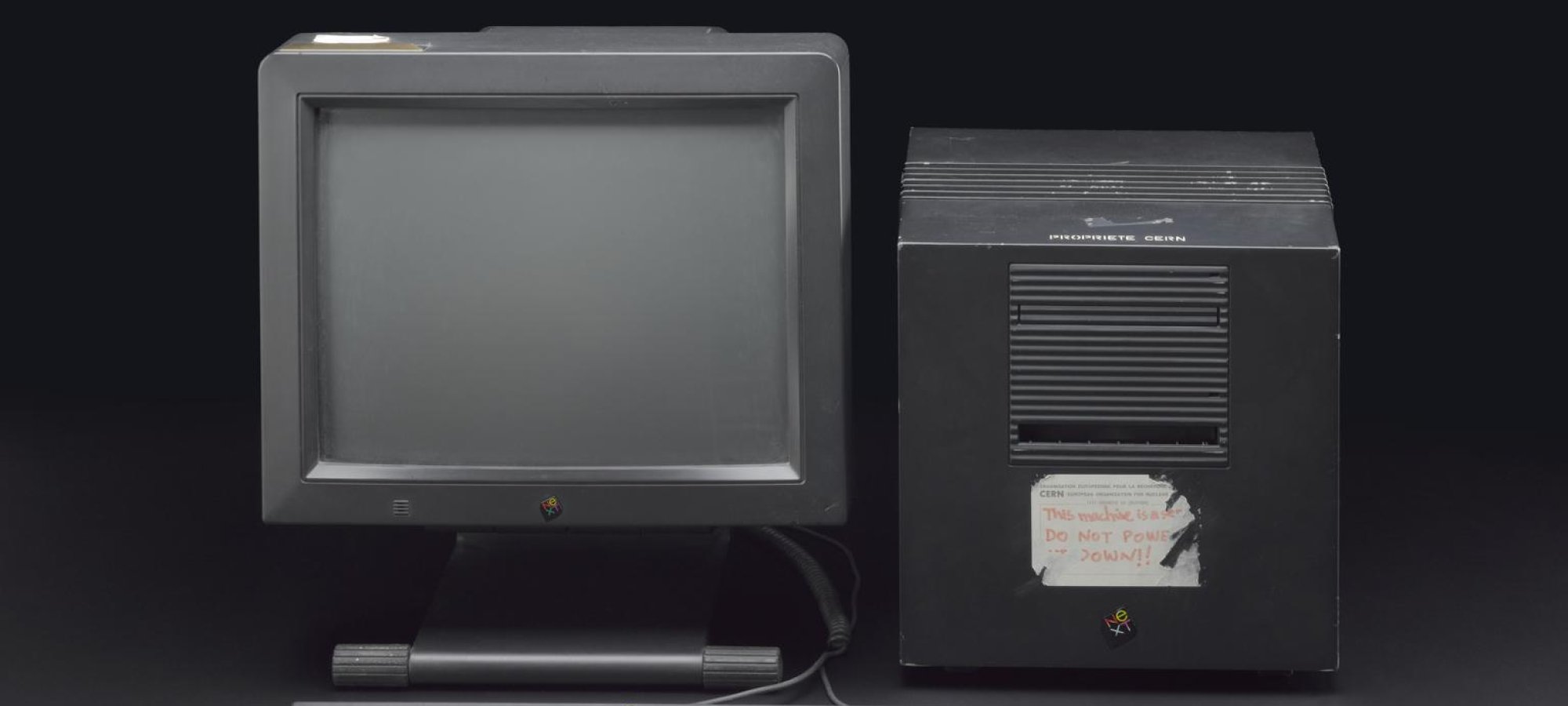

- The World Wide Web was created by Tim Berners-Lee in 1989.

- Apple released its first Macintosh computer in 1984, featuring a graphical user interface.

- The best-known story behind the term ‘bug’ for a computer glitch dates to 1947, when a moth caused a malfunction in the Harvard Mark II computer.

ENIAC, one of the first general-purpose electronic computers, was completed in 1945 and weighed over 27 tons.

The completion of ENIAC in 1945 marked a significant milestone in the history of computing. It was not the first computing machine, but it was one of the first electronic computers that could be programmed for general-purpose work. Weighing over 27 tons, ENIAC showed that large-scale electronic computation was practical, and it paved the way for the machines that followed.

The invention of the microprocessor in 1971 revolutionized the computer industry.

The invention of the microprocessor in 1971, with Intel's 4004, marked a pivotal moment in computer history. By placing a processor on a single chip, it enabled smaller, cheaper, and more energy-efficient computers. Microprocessors now sit at the heart of the computing devices we rely on today, from personal computers to smartphones, shaping the way we work and communicate.

The World Wide Web was created by Tim Berners-Lee in 1989.

The World Wide Web, a system that transformed the way we access and share information online, was created by Tim Berners-Lee, who proposed it in 1989 while working at CERN. The Web is not the internet itself but a service built on top of it, one that lets users navigate linked pages, find content, and connect with people across the globe. Berners-Lee’s invention has had a profound impact on society, opening up new possibilities for communication, collaboration, and knowledge sharing.

Apple released its first Macintosh computer in 1984, featuring a graphical user interface.

In 1984, Apple made a significant mark in computer history with the release of its first Macintosh computer. Graphical user interfaces already existed on earlier machines such as the Xerox Alto and Apple’s own Lisa, but the Macintosh brought the idea to a mass market. Its graphical interface made computers more accessible and user-friendly, and it set a new standard for personal computing.

The best-known story behind the term ‘bug’ for a computer glitch dates to 1947, when a moth caused a malfunction in the Harvard Mark II computer.

The word ‘bug’ was already engineering slang for a fault before computers existed, but its best-known computing anecdote dates to 1947. Operators of the Harvard Mark II traced a malfunction to a moth trapped in one of the machine’s relays and taped it into the logbook with the note “First actual case of bug being found.” The story helped cement the term in computing and offers a glimpse of how early pioneers dealt with technical challenges.